Nvidia’s RTX 2080 benchmarks vs GTX 1080: Misleading Stats?

Nvidia has released its GeForce RTX 2080 benchmarks, and they are surrounded not so much by misinformation as by different ways of interpreting the results. In this post, we take a close look at the performance numbers and try to clear things up so that you can temper your expectations about exactly what Nvidia is releasing here.

Nvidia RTX 2080 vs GTX 1080 Performance Analysis

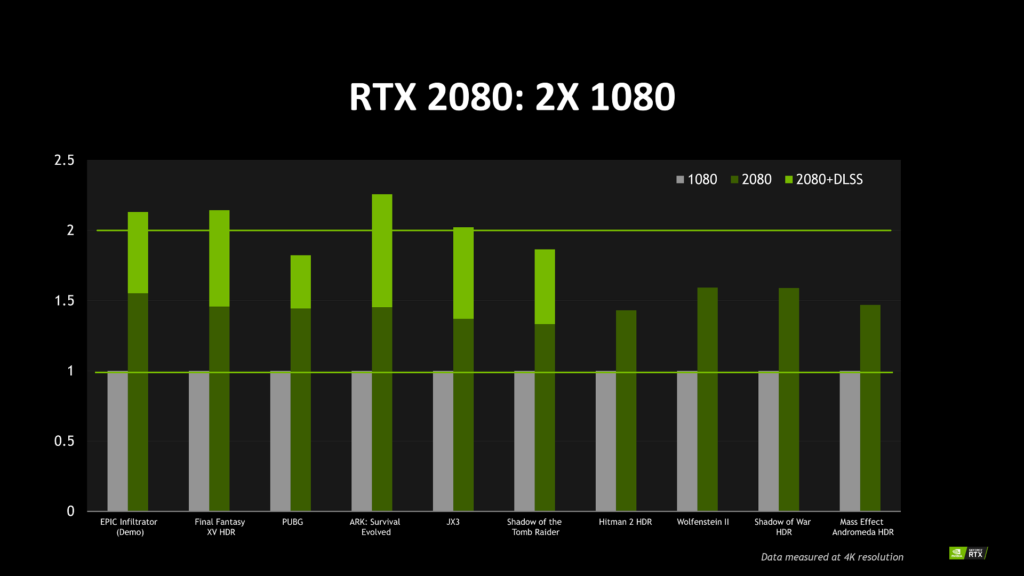

The green team recently posted two images showcasing the performance of its next-generation, Turing-based RTX 2080 card. One of those images had actual frame rates attached to it. The other one, however, was kind of misleading, not because of how it looked but because of the way the chart was set up, which led a lot of people to assume things that may not be there.

According to this chart, Nvidia’s RTX 2080 delivers twice the performance of a single GTX 1080 at 4K. That would be well and good if true but, unfortunately, it doesn’t seem to be the case.

Nvidia is showing that the RTX 2080 is 2x to roughly 2.5x faster than the 1080 in games that support Turing’s rather cool Deep Learning Super Sampling tech, or DLSS (the light green bar). Some people have taken this to mean that DLSS automatically gives you free performance, which isn’t the case.

A graphical option that is built into a video game is not going to give you free performance. What it can do is take the work the GPU would otherwise spend on anti-aliasing and move it to the tensor cores, which then handle all of that processing.

It basically amounts to the same thing as turning off anti-aliasing on the GTX 1080, which I can nearly guarantee they did not do for this comparison. With DLSS, however, you turn the conventional AA solution off and get much better performance because that work is offloaded to the tensor cores rather than the main shader cores.

DLSS doesn’t give you free performance whatsoever. You can see a similar jump in performance if you turn anti-aliasing off on the GTX 1080. Disabling the feature is going to free up resources no matter what.

It appears that the tensor cores can process DLSS fast enough to give some performance increase. But it’s not necessarily a fair comparison to show one card running DLSS against another that is running conventional anti-aliasing. DLSS is a feature that only one of the two cards has, and it requires support from the game’s developer to enable.

Nvidia could have raised the anti-aliasing at 4K to something like 8x and shown an even bigger lead over the GTX 1080. But would that be an apples-to-apples comparison? Of course not.
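To make the apples-to-oranges point concrete, here is a minimal sketch of how a "relative performance" bar is derived from frame rates. Every number in it is hypothetical and invented purely for illustration; none of them are Nvidia's figures or measured results, and the function and dictionary names are my own. It only shows how the headline ratio shifts when the two cards are not run at the same settings.

```python
def relative_performance(new_fps: float, baseline_fps: float) -> float:
    """Return how many times faster the new card is than the baseline."""
    if baseline_fps <= 0:
        raise ValueError("baseline_fps must be positive")
    return new_fps / baseline_fps


# HYPOTHETICAL frame rates at 4K, made up for this example only.
HYPOTHETICAL_FPS = {
    "gtx1080_conventional_aa": 30.0,  # old card, conventional anti-aliasing on
    "rtx2080_conventional_aa": 45.0,  # new card, same settings as the old card
    "rtx2080_dlss": 60.0,             # new card, DLSS replacing conventional AA
}

# Matched settings: both cards run the same anti-aliasing.
matched = relative_performance(
    HYPOTHETICAL_FPS["rtx2080_conventional_aa"],
    HYPOTHETICAL_FPS["gtx1080_conventional_aa"],
)

# Mismatched settings: DLSS on the new card vs conventional AA on the old one.
mismatched = relative_performance(
    HYPOTHETICAL_FPS["rtx2080_dlss"],
    HYPOTHETICAL_FPS["gtx1080_conventional_aa"],
)

print(f"Same settings on both cards: {matched:.2f}x")
print(f"DLSS vs conventional AA:     {mismatched:.2f}x")
```

With these made-up inputs the same pair of cards comes out at 1.5x or 2x depending purely on which settings are being compared, which is why a ratio without the settings and the underlying frame rates tells you very little.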

Conclusion

To put it simply, the numbers Nvidia has posted aren’t directly comparable. It’s like deliberately setting up the wrong comparison. It’s also unclear what settings they used to achieve these performance numbers, and what kind of frame rates they equate to.

At least with the 10-series launch, they showed the same games tested at the same settings on both the 10-series and the 9-series GeForce cards. With this series, they don’t seem to want to do that, which is concerning to me.

I am not saying the 20-series won’t perform well, but it feels like Nvidia is trying to hide things, because they are not giving us directly comparable performance numbers as they did with the 10-series cards. The debut of the RTX 2080 mostly gives the impression that Nvidia is attempting to justify the price increases over the last generation.

I don’t want to come across as an Nvidia hater because I absolutely love GeForce cards, and I actually think that the RTX 2080 is going to be a great gaming card. It’s definitely going to beat the GTX 1080 and might even beat the 1080 Ti, but the performance numbers that Nvidia is putting out there don’t tell us anything meaningful.

All this is to say: don’t buy anything until reviews come out. There’s not enough information available, and the information that is coming out isn’t clear enough for people to make informed decisions about exactly what they are getting. That’s the case with these RTX 2080 benchmarks too.